Build an AI inventory that can be assessed against top risks and threats for AI usage, providing your organization with a complete security posture. A security posture is essential to understand the current state of AI adoption within your organization. With FireTail’s AI Security Posture Management solution, you gain full visibility into your AI usage, assess risks across models and agents, and safeguard your organisation from hidden threats.

Effective AI Security Posture Management is key to enabling an organization to adopt AI in any meaningful way. Knowing what you have, and the specific problems posed by each resource, app, or AI use, is essential to understanding the risk of each AI instance.

With single-pane-of-glass visibility into all AI usage across your entire organization, you can define and develop the policies needed to determine which AI usage is acceptable and which falls outside corporate governance boundaries.

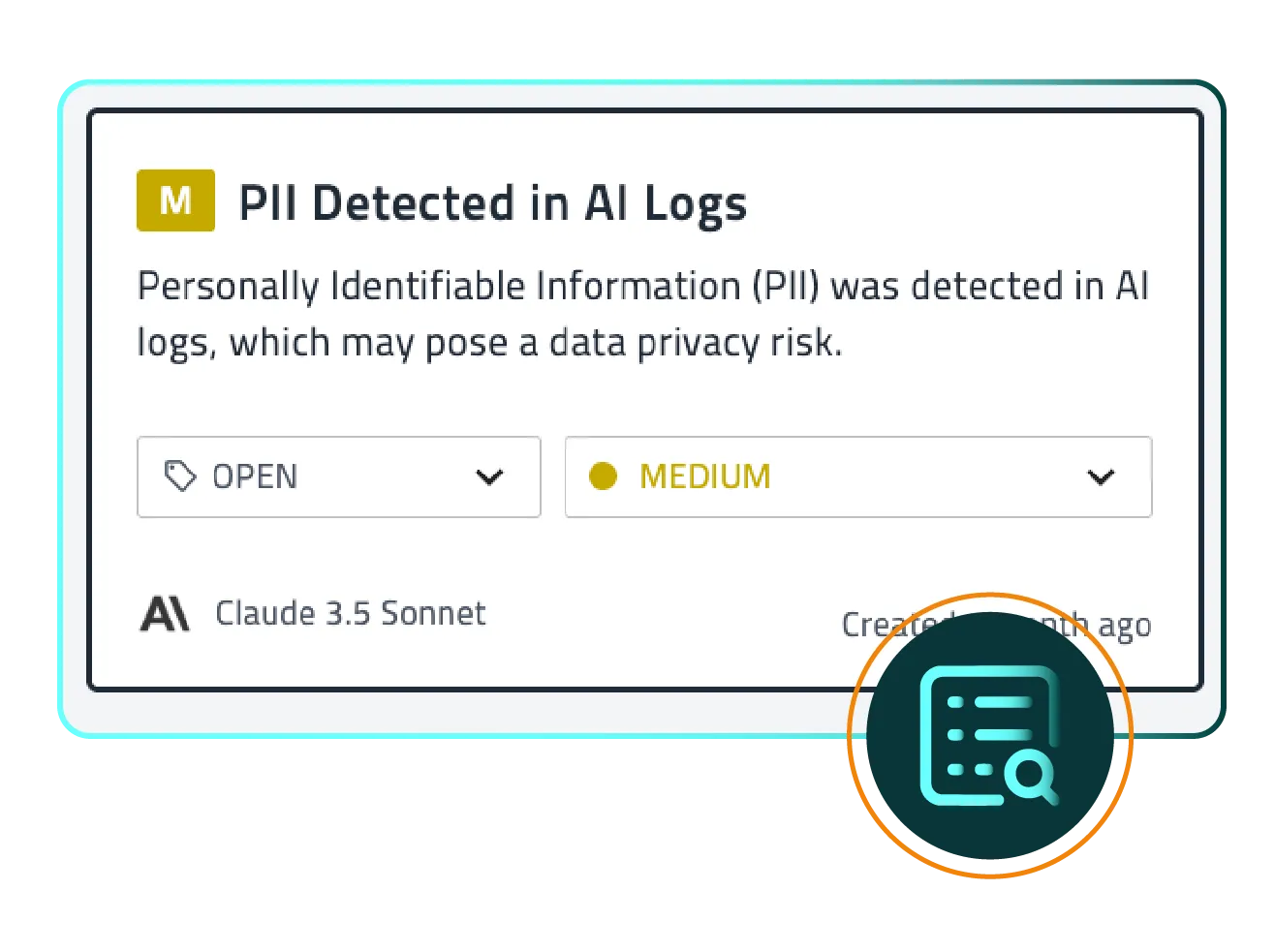

Protect your organization from regulatory risks without stifling AI innovation. Many types of data are considered PII, or fall under regulations such as GDPR and CCPA. Lacking a clear security posture can put that compliance at risk.

FireTail provides a clear, continuously updated view of your AI data flows and risk posture, helping you proactively communicate and demonstrate responsible data usage. Customer trust is not earned just once; it is maintained through visibility, accountability, and action.

App Security Director @ Asian MedTech

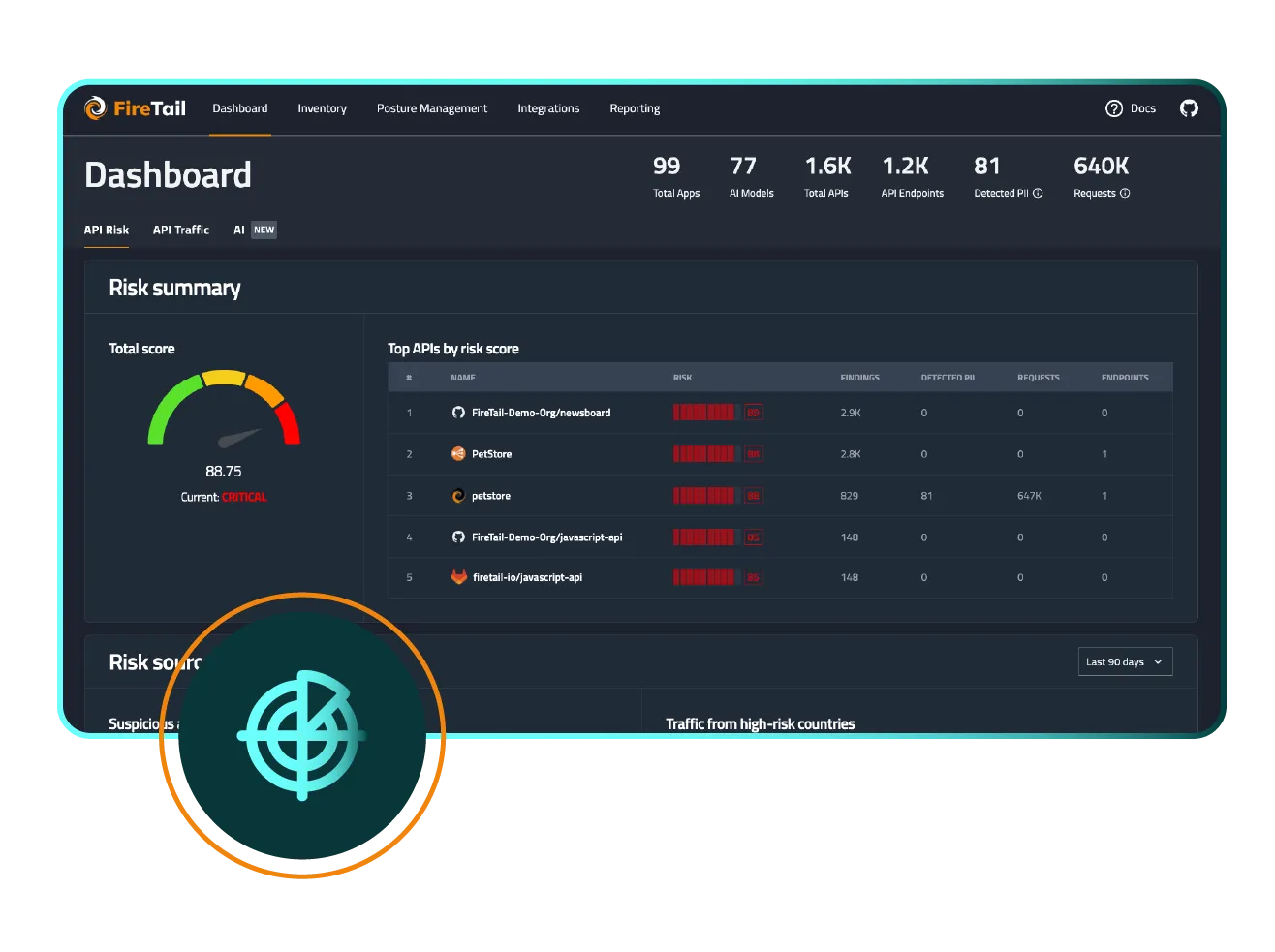

Security leaders are often asked the question “What risks do we have?” with any technology platform. The growth rates, intense data usage and prevalence of shadow AI make AI a particular challenge. FireTail helps you answer that question with certainty.

FireTail takes a unique approach based on a 3-tier analysis of AI risk:

Having a strong and consistent AI security posture allows your organization to move forward with AI adoption, giving security leaders and business users peace of mind. Risks can be appropriately identified and managed.

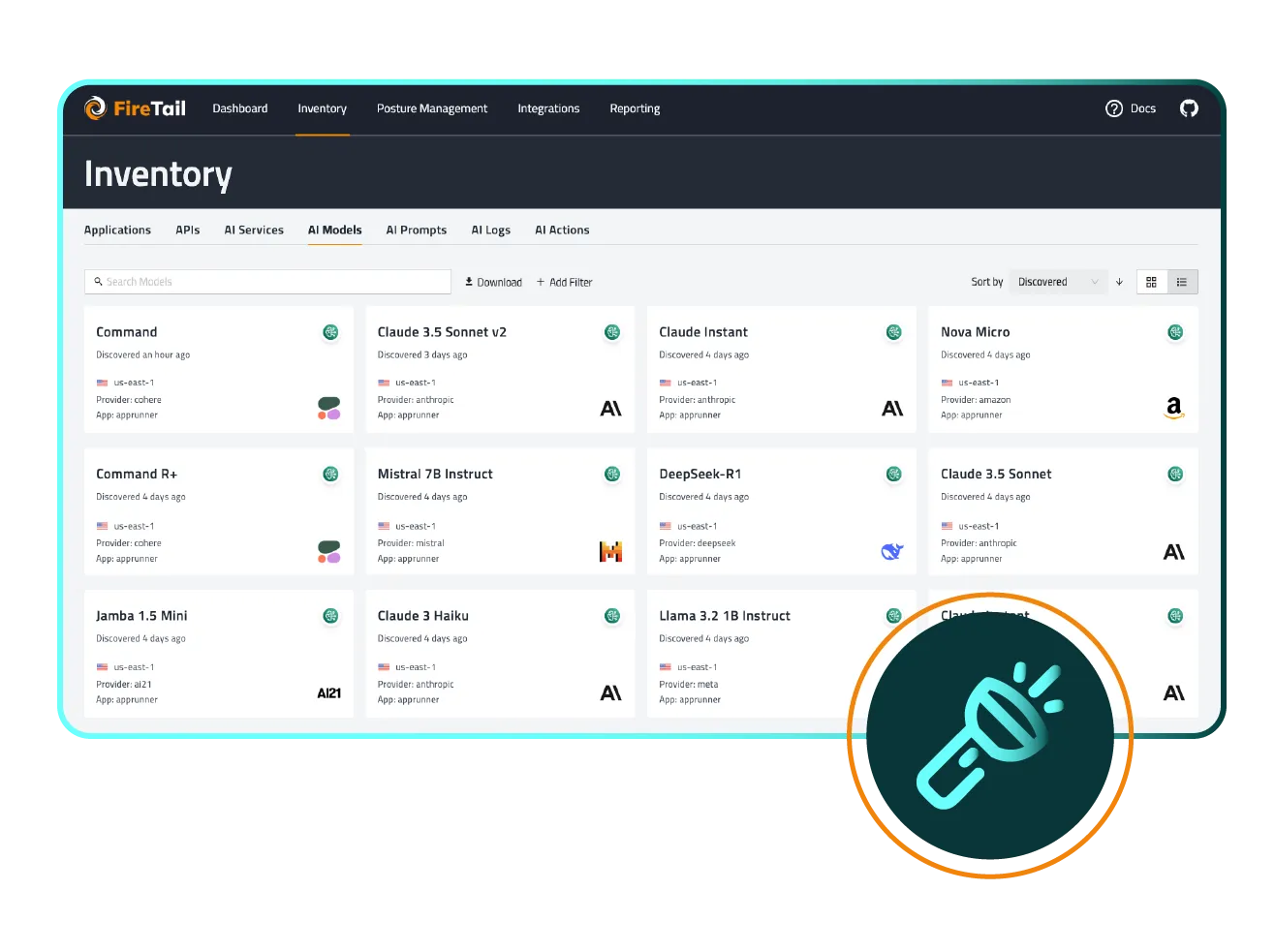

Your AI posture starts with visibility. Identify every AI model, agent, data stream, integration, or shadow AI activity across your environment. Without a full inventory, unseen risks go unmanaged.

FireTail automatically scans and inventories all AI activity, from embedded LLMs to unchecked shadow AI, helping you build a complete map of your AI landscape.

Once you know where AI is being used, it’s critical to evaluate each asset. Look beyond static vulnerabilities and assess interactions, context, behaviour, and data exposures across your AI lifecycle.

A strong AI security posture isn’t just technical; it also requires organisational governance. Define policies for AI usage, enforce role-based access, and monitor usage in production. Guardrails such as output filtering help prevent misuse and ensure safety.

FireTail's contextual governance engine aligns AI usage with business objectives, compliance needs, and operational boundaries.

Find answers to common questions about AI security for MSPs, MSSPs and MDRs

FireTail uses a "Per User, Per Month" model. To prevent double-charging, we deduplicate unique user identities across all workspace, browser, and endpoint signals.

This term refers to assessing the security, governance and operational risks specifically associated with autonomous or semi-autonomous AI agents operating within an organisation. It’s increasingly relevant as organisations deploy AI bots, assistants and agents.

To ensure you can maintain a high-margin service line, FireTail uses a unique identity primary key (Email or IDP ID) for all billing. This means a single individual counts as exactly one billable user, regardless of whether they generate activity through a browser extension, a workspace integration, or an endpoint agent concurrently. Our platform deduplicates these signals automatically, providing you with a clear, predictable cost structure to present to your clients.
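To make the deduplication idea concrete, here is a minimal sketch, not FireTail's actual implementation. It assumes each signal carries a single identity string (an email or IDP ID) and that trimming whitespace and lowercasing is a safe way to normalize it. The `Signal` class, its field names, and `billable_users` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    # Hypothetical record shape; real signals will carry more fields.
    source: str    # "workspace", "browser", or "endpoint"
    identity: str  # email or IDP ID used as the billing primary key


def billable_users(signals):
    """Return the set of unique identities across all signal sources.

    Assumption: identities are compared case-insensitively after trimming.
    """
    return {s.identity.strip().lower() for s in signals}


signals = [
    Signal("browser", "ana@example.com"),
    Signal("workspace", "Ana@Example.com"),
    Signal("endpoint", "ana@example.com "),
    Signal("browser", "raj@example.com"),
]

# Ana appears in three sources but is counted once.
print(len(billable_users(signals)))  # prints 2
```

This sketch does not attempt to link an email address to a separate IDP ID for the same person; that mapping would need an identity source the example does not model.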

FireTail moves beyond "just security" by providing tangible business intelligence that validates a client's AI investment. You can provide concrete reports on which teams are using AI and which tools they prefer, helping clients identify "shelfware" in expensive license pools like Microsoft Copilot or ChatGPT Enterprise. Additionally, the platform identifies the power users within the client's organization who can lead internal training and drive ROI from AI adoption.
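As a rough illustration of how shelfware and power users could be derived from usage data, the sketch below compares a list of licensed users against a list of usage events. It is an assumption-laden example rather than the platform's method: `license_report`, its input shapes, and the `power_threshold` cut-off are all hypothetical.

```python
from collections import Counter


def license_report(licensed_users, usage_events, power_threshold=3):
    """Split users into unused licenses ("shelfware") and power users.

    licensed_users: identities holding a paid seat (hypothetical input).
    usage_events: one identity per observed usage event (hypothetical input).
    power_threshold: assumed minimum event count for a "power user".
    """
    counts = Counter(usage_events)
    shelfware = sorted(u for u in licensed_users if counts[u] == 0)
    power_users = sorted(u for u, n in counts.items() if n >= power_threshold)
    return shelfware, power_users


licensed = ["ana@example.com", "raj@example.com", "li@example.com"]
events = ["ana@example.com"] * 3 + ["raj@example.com"]

shelfware, power_users = license_report(licensed, events)
print(shelfware)    # ['li@example.com']
print(power_users)  # ['ana@example.com']
```

A real report would likely window events by billing period and group results by team; both are omitted here to keep the sketch short.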

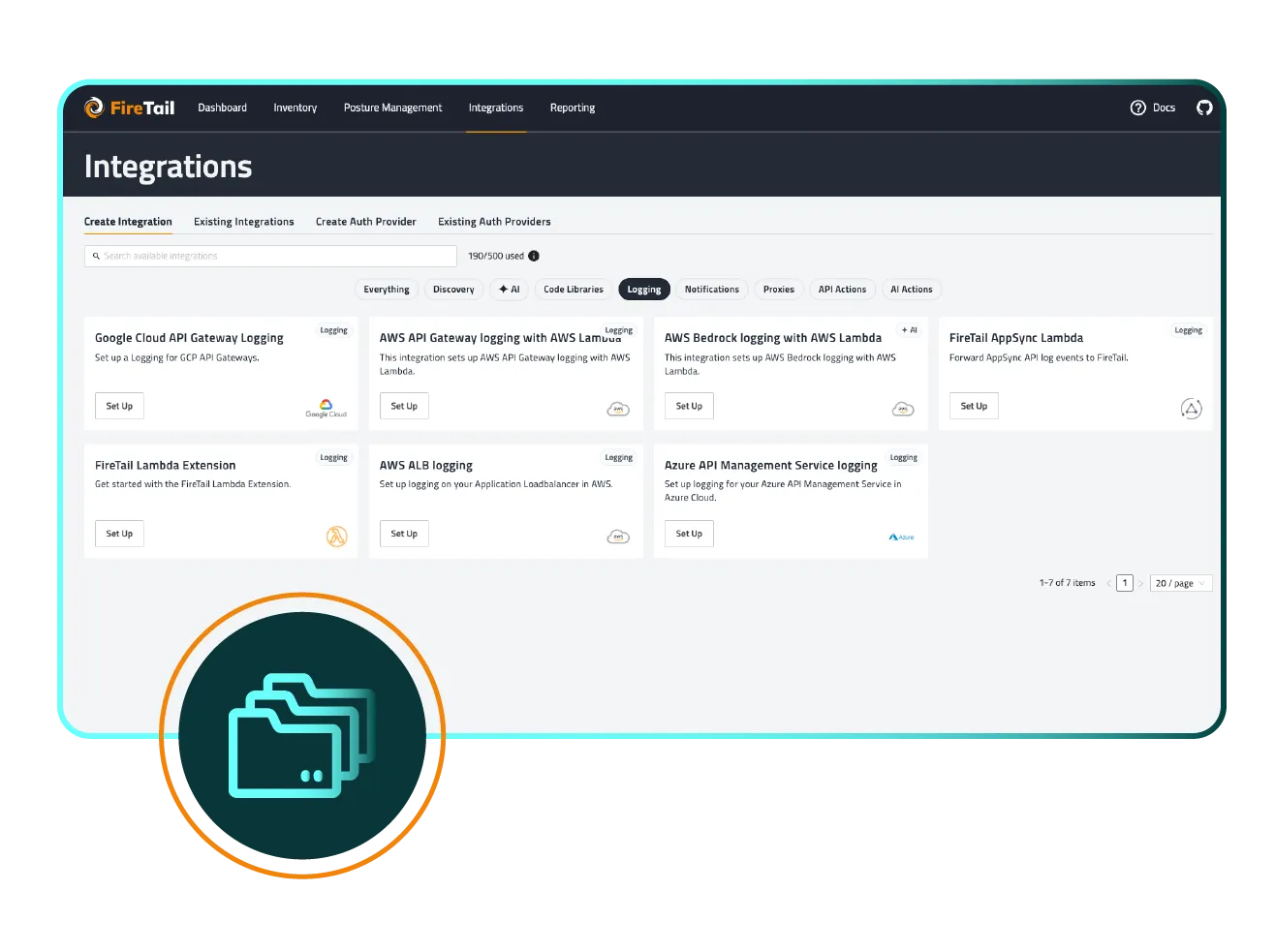

FireTail ensures complete AI visibility by consolidating three primary signal sources into a single, multi-tenant dashboard: Workspace, Browser, and Endpoint.

- Workspace Integrations: FireTail captures sign-ups, SSO activity, and tool usage across Google Workspace and Microsoft 365.

- Managed Browser Extension: A lightweight extension for Chromium-based browsers monitors tool usage, requests, and responses in real time.

- Endpoint Agent: For total coverage, a dedicated agent tracks desktop AI usage and MCP requests across Windows, macOS, and Linux environments.

This multi-layered approach enables continuous AI discovery, allowing organizations to identify every AI model, agent, and shadow AI activity across their entire environment. By normalizing and analyzing logs from these sources, FireTail builds a comprehensive inventory of all AI usage, providing the visibility needed to manage hidden threats and secure the workforce.
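The normalize-then-inventory step described above can be sketched as follows. This is a simplified illustration under stated assumptions, not FireTail's pipeline: the raw field names (`actor_email`, `app_name`, `user`, `site`, `account`, `process`) and both functions are hypothetical stand-ins for whatever each source actually emits.

```python
from collections import defaultdict


def normalize(source, raw):
    """Map a source-specific record onto a common (source, user, tool) shape.

    All raw field names below are hypothetical examples.
    """
    if source == "workspace":
        return {"source": source, "user": raw["actor_email"], "tool": raw["app_name"]}
    if source == "browser":
        return {"source": source, "user": raw["user"], "tool": raw["site"]}
    if source == "endpoint":
        return {"source": source, "user": raw["account"], "tool": raw["process"]}
    raise ValueError(f"unknown source: {source}")


def build_inventory(records):
    """Group normalized events by tool, tracking who uses it and where it was seen."""
    inventory = defaultdict(lambda: {"users": set(), "sources": set()})
    for source, raw in records:
        event = normalize(source, raw)
        entry = inventory[event["tool"].lower()]
        entry["users"].add(event["user"].lower())
        entry["sources"].add(event["source"])
    return dict(inventory)


records = [
    ("workspace", {"actor_email": "ana@example.com", "app_name": "ChatGPT"}),
    ("browser", {"user": "raj@example.com", "site": "chatgpt"}),
    ("endpoint", {"account": "ana@example.com", "process": "local-llm"}),
]

inventory = build_inventory(records)
for tool, entry in sorted(inventory.items()):
    print(tool, sorted(entry["users"]), sorted(entry["sources"]))
```

Keying the inventory on a lowercased tool name is a deliberate simplification; matching the same tool across sources in practice would need a proper catalog of tool aliases.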